People

Olivia Hughes

Olivia works closely with several of our AoE teams, including Climate, Nature, and Equity, Inclusion and Justice. She provides administrative and research support, while also securing cross-functional team collaboration opportunities.

Prior to joining BSR, Olivia held government positions with the United States Secret Service, the Office of Senator Kirsten Gillibrand, and the New York State Assembly.

Olivia has a BA in Political Science with minors in Public Policy and History from Hobart and William Smith Colleges.

Blog | Thursday September 28, 2023

BSR’s Climate Journey Toward Net Zero

As a mission-driven sustainable business network, we focus on impact in everything we do. Learn more about the steps we’re taking as an organization to stay in line with our core beliefs and achieve net zero.

With 2030 quickly approaching, global climate commitments must move toward swift action to halve emissions and reach global 2050 net-zero goals. At BSR, we work with our membership network to deliver credible action to keep 1.5°C within reach, while also maximizing synergies with nature; human rights; and equity, inclusion, and justice.

While we focus on sustainability impact in all the work we do with our members, like all organizations, our day-to-day operations have an environmental impact. It is important we hold ourselves to the same standard we expect from members, and recognize this opportunity to share openly about our climate journey. We have therefore set a climate target for our organization and embarked on a journey to deliver on it.

Our Climate Target

Using BSR’s 2021 GHG footprint as the baseline, BSR commits to:

- A near-term (2030) target, to reduce total Scope 1 (own operations) and Scope 2 (energy source) emissions by 50%, and our top emitting total Scope 3 (value chain) emissions by 42%.

- A long-term (2040) target, to reduce total Scope 1 and 2 emissions by 90%, our total Scope 3 emissions by 90%, and to reach net-zero by neutralizing residual emissions with permanent removals.

Starting in 2023, we are applying a carbon price to our Scope 1, 2, and 3 emissions, and using the funds to invest in climate solutions that contribute to global net zero beyond our value chain. In 2023, we have decided to use the US Government Social Cost of Carbon as a reference.
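The arithmetic behind percentage targets and an internal carbon price can be illustrated with a minimal sketch. All figures below are hypothetical placeholders, not BSR's actual footprint or the actual Social Cost of Carbon value; the names `Footprint`, `target_emissions`, and `carbon_fund` are invented for illustration. For simplicity the sketch applies the 42% near-term cut to all Scope 3 emissions, whereas the target above applies it to the top emitting Scope 3 categories.

```python
from dataclasses import dataclass


@dataclass
class Footprint:
    """Annual emissions in tonnes of CO2 equivalent (tCO2e)."""
    scope1_2: float
    scope3: float


def target_emissions(baseline: Footprint, cut_scope1_2: float, cut_scope3: float) -> Footprint:
    """Return the emissions level implied by fractional cuts against a baseline."""
    return Footprint(
        scope1_2=baseline.scope1_2 * (1 - cut_scope1_2),
        scope3=baseline.scope3 * (1 - cut_scope3),
    )


def carbon_fund(footprint: Footprint, price_per_tonne: float) -> float:
    """Return the budget raised by pricing every tonne across Scopes 1, 2, and 3."""
    return (footprint.scope1_2 + footprint.scope3) * price_per_tonne


# Hypothetical 2021 baseline, chosen only to make the arithmetic easy to follow.
baseline_2021 = Footprint(scope1_2=100.0, scope3=1000.0)

near_term_2030 = target_emissions(baseline_2021, cut_scope1_2=0.50, cut_scope3=0.42)
long_term_2040 = target_emissions(baseline_2021, cut_scope1_2=0.90, cut_scope3=0.90)

# Hypothetical price per tonne; substitute the reference value actually adopted.
fund_for_baseline_year = carbon_fund(baseline_2021, price_per_tonne=50.0)

print(near_term_2030, long_term_2040, fund_for_baseline_year)
```

Because the fund scales with total emissions, it shrinks as reductions are delivered, which is one reason to budget removals for residual emissions separately.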

For more information on BSR’s GHG emissions data and climate targets, please visit Sustainability at BSR.

Climate Goal Development Process

We believe impactful work and a credible climate target and implementation plan go together. The SBTi Net Zero Standard is the most robust standard currently available, and although as a non-profit we cannot officially commit to the SBTi, we are committing to a target that follows SBTi’s guidance.

BSR’s climate goal and implementation plan are grounded in four key principles:

- A clear vision on transformative climate leadership.

- Learning from peers’ climate ambitions to ensure alignment with best practices.

- Aligning with the recommendations provided to BSR members and demonstrating a commitment to “walking the talk”.

- Leveraging the transformative power of BSR’s unique membership network model as a catalyst for broader change.

BSR’s climate goal development process began with a comprehensive greenhouse gas footprint from an external expert. We then participated in one-to-one discussions on the learnings and challenges of developing a climate strategy with non-profit peers, and benchmarked industry counterparts and partners before forming and testing the feasibility of multiple climate goal options in alignment with the SBTi Net Zero Standard. The creation of the implementation plan included a review of our emissions data to understand BSR’s leverage to influence and reduce Scope 3 emissions, our largest source of emissions—largely due to our contractors and professional services use.

The whole process was validated by BSR’s internal experts, leadership team, and the CEO to ensure 1) internal operational and financial support and 2) alignment with business needs to continue our mission.

Implementation and Beyond

Climate commitments have true value when accompanied by a robust implementation plan with specific milestones. The delivery of our climate targets starts in 2023 and is supported by three timebound task forces:

- Renewable energy, heating, and green offices: Propose strategies to reduce offices’ GHG footprint and engage staff in sustainable practices at the office—including green IT and waste reduction—both with considerations for the impact of home working.

- Policy development: Include climate considerations in travel and contractor engagement policies to reduce emissions from two of our largest sources of Scope 3 emissions—business travel and purchased services.

- Beyond value chain mitigation strategy: Develop and deliver BSR’s strategy to invest in climate solutions starting now, and define parameters for our long-term strategy to invest in carbon removals.

A key aspect of our implementation plan is the use of the social cost of carbon to fund our investments in climate solutions that contribute to global net zero beyond our value chain. We hope that this unique approach can inspire others to ground climate investment estimations in amounts that reflect the real-world damage to people and the environment.

Although BSR is a small organization with a small GHG footprint on a global scale, we believe that our current climate goal will have a positive effect and help us take responsibility for our own footprint. In parallel, we are working on ways to activate the great potential for GHG emissions reduction with our members.

BSR’s implementation plan consists of collaboration across departments, engagement with partners and BSR members, and continuous iteration. We have climate goal implementation taskforces involving different functional teams on climate action and will work with partners and BSR members to reduce GHG emissions together. While we are still figuring out how to address our main Scope 3 hotspots, such as our contracted services and partners and business travel, we are not waiting until we have a perfectly developed strategy to act.

Leading by Example

BSR acknowledges the ambitious nature of our net zero target. As a small organization, we have limited leverage on our Scope 3, and do not have all the solutions now nor the resources of the large companies with which we work. However, we remain steadfast in our commitment to playing our part in achieving global net-zero and being an example of how SMEs can prioritize sustainability.

This pledge reflects BSR's core identity as a sustainability organization, the urgency of the climate crisis, and our attitude to “walking the talk” with transparency, humility, ambition, and a commitment to sustainable business practices.

Achieving climate goals is a journey, and BSR remains dedicated to regularly reviewing our climate strategy, open communication on our progress, and continuous improvement, including expanding our sustainability efforts to address other important issues in the future.

Blog | Wednesday September 27, 2023

Making Connections Across Professions

With an acceleration of alignment among initiatives, guidelines, and standards over recent years, BSR staff share their thoughts going forward.

One of BSR’s three core principles for putting our mission into action is to “make connections between issues.” Recent developments in the field of just and sustainable business are reinforcing the importance of this principle.

BSR has long emphasized that addressing impacts on the environment, people, enterprise value, and the economy requires holistic approaches.

However, this point of view has not always been matched by the various initiatives, guidelines, and standards that shape day-to-day work of the just and sustainable business field. These have often been the realm of specialist and distinct professional communities, such as those in human rights, social justice, climate change, and sustainability reporting.

Encouragingly, there has been an acceleration of alignment among initiatives, guidelines, and standards over recent years. We’re optimistic about these developments and believe they will be sustained by a deliberate joining up of these previously separate domains.

Examples of greater connection between the fields of reporting and disclosure, business and human rights, diversity, equity and inclusion (DEI), social impact, and climate change are increasingly common.

The “impact materiality” principle in the new European Sustainability Reporting Standards (ESRS) clearly states that “the materiality assessment of a negative impact is informed by the due diligence process defined in the international instruments of the UN Guiding Principles on Business and Human Rights and the OECD Guidelines for Multinational Enterprises.”

The ESRS and the new IFRS Sustainability Disclosure Standard General Requirements both adopt and build on the four-part “governance – strategy – risk – metrics” framework first introduced by the Task Force on Climate-related Financial Disclosures (TCFD).

The climate disclosure standards recently published by the IFRS and ESRS are significantly ahead of their social counterparts and serve as a holistic model for other issue-specific standards to build from.

However, achieving this progress in practice requires that we address some challenging methodological questions and spread best practices across professions. For example:

- How do we take the concepts of scope (number of people), scale (gravity of harm), and remediability (ability to reverse harm) that are well-understood in the field of human rights and apply them to environmental impacts such as climate change, as is required by the ESRS and GRI?

- Given they both now use the same prioritization criteria, should previously separate materiality and salience assessments be combined? If so, how, and what are the risks and opportunities of doing so?

- How do we ensure that we are addressing inequities in outcomes and opportunities, and promoting diversity, equity and inclusivity in systems and infrastructure designed to address environmental impacts?

- How can best practices in DEI and human rights due diligence inform new methods of environmental due diligence and support integrated due diligence efforts?

- How do expectations for accuracy, reliability, and comparability of quantitative data transfer to the often more qualitative realm of social impacts?

- Are quantitative forward-looking targets always the gold standard, or are other approaches (such as qualitative or normative statements of ambition) sometimes more appropriate?

- How might scenario analysis, common in the climate change field, improve the quality of DEI and human rights due diligence and understanding of social impact across the value chain?

- How do we undertake DEI and human rights due diligence of the energy transition?

- How can we work with boards, executive management, and sustainability experts to facilitate greater collective understanding, action and governance of material risks, opportunities, and their impact on long-term business value?

- How do we effectively engage affected stakeholders in all our efforts without causing significant fatigue?

We know that the answers to these questions and many more will only be resolved by multi-disciplinary teams taking collaborative approaches and addressing shared challenges together.

Many of our company members are joining up functions and collaborating among finance, risk, compliance, supply chain, strategy, DEI, and sustainability teams to apply the various new requirements of impact materiality in a combined corporate approach.

At BSR, we are likewise bringing experts from across our teams together on projects and sharing insights across functions to better partner with our member companies.

We look forward to opportunities to collaborate with other organizations—outside counsel, large consulting companies, and audit firms—in multi-vendor arrangements where we can achieve more together than we could alone.

Making connections between issues is easy to say, but hard to do in practice. However, this moment demands nothing less in our mission to work with business to create a just and sustainable world.

Blog | Tuesday September 26, 2023

Why an Impactful Sustainability Strategy needs Collaboration

When discussing BSR’s Collaborative Initiatives with members, we are often asked “What is the value proposition? Is it worthwhile?”

The immediate value proposition, or the “service” that collaboration can offer in the near term, is an insufficient measure of the deeper strategic value that comes from engaging in collaboration for sustainable development. Collaboration is the only means by which companies can meet their long-term business and sustainability goals where these goals cannot be achieved independently.

Nonetheless, we frequently see companies participate with short-term aims while leaving collaboration off their longer-term strategic roadmaps.

At BSR, we believe that the lasting value of contributing to collaborative efforts is like that of a Research & Design function: when done well, the catalytic mix of co-investment, collective creativity, problem-solving, and cross-sector relationship development can lead to entire systems change that enables markets to continue to thrive, more sustainably.

For example, members of Action for Sustainable Derivatives initially came together to improve transparency of palm oil derivatives down to farm and field level and, due to the strength of the collaboration, have expanded their ambition to create deeper impacts, e.g., launching a social impact fund in partnership with strong local non-profits, with momentum toward further innovation in the future. Examples of the application of collective muscle for systemic leverage are evident across multiple collaborations such as RISE (Reimagining Industry to Support Equality), a recent merger of collaborative initiatives working for female equality in the textile industry.

Companies are petitioned every day to join new partnerships and collaborations, and it can become overwhelming. How might a sustainability team make strategic decisions about which collaborations to join, and justify the time and expense? And how can the company tap into the deeper, strategic value that collaboration can add to their sustainability journey?

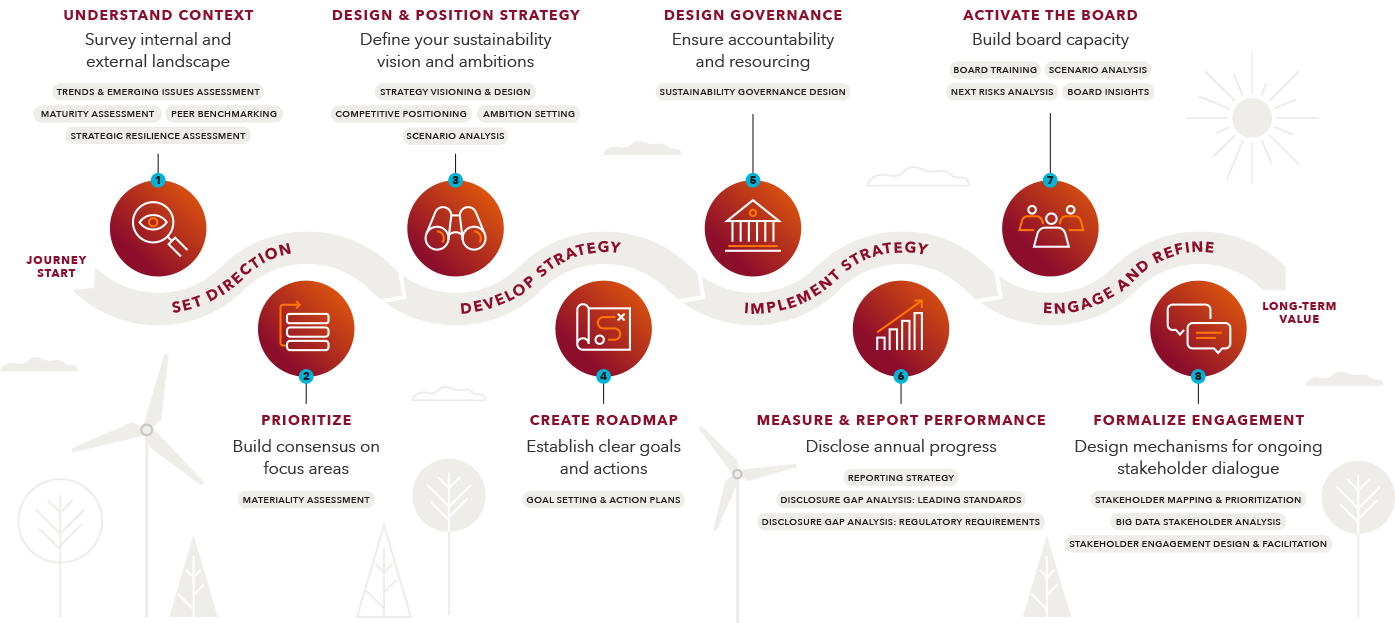

We encourage every company to consider collaborative opportunities across the entire sustainability strategy journey. The following chart includes key considerations for a sustainability team across each step to inform, align with, and contribute to their broader strategy through collaboration.

Key recommendations and considerations for high-impact collective action when setting sustainability goals include:

Set Direction

Are we part of the right collaboration? Are there ways to leverage collaborations to achieve greater influence on issues and risks where the company has limited influence?

- Consider tapping the expertise of your collaboration to continuously scan for emerging sustainability risks and opportunities to better understand the current environment.

- Don’t overlook the value of industry or issue area working groups that explore emerging issues; these can be valuable, ongoing sources of information for corporate strategic planning.

- Encourage the company’s representatives to meet on a regular basis to share learnings and updates, inform internal teams on agreements made, and brainstorm new approaches.

Develop Strategy

When setting sustainability goals, are we thinking individually or systemically? How might we better align our individual corporate goals and targets with those of the collaborative initiative? Do we have a rubric to evaluate and prioritize the collaborations that we participate in?

- It is tempting to establish sustainability goals that are achievable by the company alone, with little external engagement required. But where business transformation is required, systemic engagement is needed as well.

- Consider offering collaborations that directly align with the company’s sustainability strategy more time, resources, and expertise, to establish ambitious goals, and to accelerate shared objectives.

- Study a collaboration’s theory of change, which points to its long-term objectives and the means through which the collaboration expects to achieve them. Consider how the company’s sustainability strategy might complement or even directly contribute to the collaboration to progress the collective effort. (If a collaboration is not able to articulate its theory of change, this may be an indication that it is not a strong partnership.)

Implement Strategy

Are we assigning the right leaders to participate in collaborations, and equipping them to make decisions on behalf of the company? Are we resourcing critical collaborations sufficiently to meet our shared goals? How might we establish accountability for collaborative results?

- Assign a senior-level decision maker to lead engagement with priority collaborations. Ensure that the company has multiple contacts with critical collaborations, sharing learning and supporting the company to maintain relationships through periods of team transition.

- Acknowledge the value of relationship building within the collaboration process, and the value the relationships can bring to the company in the long term. Make time and resources available for in-person convening and in-depth relationship development and work.

- Proactively funnel resources, expertise, and autonomy in decision-making to the company’s collaboration leaders, to enable them to move fast, react in real time, and drive forward your shared goals.

Engage and Refine

Which collaborative efforts are working? Are there any sustainability issues that we are not yet collaborating on, and if so, why not? Are we actively engaging in our collaborations to provide feedback on their strategy?

- A company may engage in many different kinds of collaborations and partnerships, knowing that a few will lead to outsized impacts, while others will not be as successful. A strategic approach to collaboration includes active portfolio management to responsibly prune those that no longer meet the company’s needs, and to add fuel to those that show promise.

- Participate in providing meaningful and timely feedback to the collaboration’s secretariat, ensuring they are well-informed about the company’s needs and ideas for impactful activities.

Collaboration is an impactful tool that many companies are only in the early stages of understanding how to use strategically, not only to achieve their own goals but also to transform a wider system. Having a well-defined collaboration strategy and investing in the skills of employees to participate in collaborative initiatives in ways that maximize a company’s investment are key factors for success.

Please contact the Collaborations team at BSR with any queries about how we can assist you in integrating collaboration into your corporate sustainability strategy.

People

Laura Dugardin

Laura supports BSR’s work on equity, inclusion and justice topics.

Prior to joining BSR full-time, Laura interned and was a contractor for BSR and RISE, on the topics of Human Rights and Women’s Leadership & Advancement. Laura also worked as a consultant for the Danish Institute for Human Rights on Baseline Assessments practices leading to National Action Plans on Business & Human Rights. She conducted research with the University of Geneva on the integration of children’s rights in Swiss-based companies. Laura also has experience with policy work, including on Gender and Corruption for the G20. She has engaged extensively on youth participation in democracy, including at the Council of Europe, in grassroots organizations, and through self-led projects.

Laura holds an MA in International Development from Sciences Po Paris, and a first-class honors BA in Business and Latin American Studies from EDHEC Business School. Laura is fluent in English, French and Spanish.

People

Marion Fischer

Marion is responsible for managing and developing BSR’s Collaborative Initiatives (CI) portfolio—providing dedicated support to the management of the portfolio as well as administrative, operational and event planning, and logistics support to key BSR CI staff and CIs across the portfolio.

Prior to joining BSR, Marion worked for the International Institute for Strategic Studies, a think tank in international affairs and global security, managing events and providing programmatic and administrative support to their Washington DC office. Previously, Marion worked for Greenflex in Paris, a consulting company in sustainable development, where she worked to foster and manage relationships with clients and their stakeholders and analyze human rights violations in clients' supply chains.

Marion holds a Master’s degree in French and common law from the University of Nanterre. She also graduated with an LLM from Boston University and is a member of the New York Bar. Additionally, Marion holds a Master's degree in International Project Management from ESCP Europe.

Insights+ | Monday September 18, 2023

The Evolving Reporting Landscape

Sustainability FAQs | Thursday September 14, 2023

Generative AI

Introduction

This FAQ sets out BSR’s perspective on generative AI and its implications for ethics and human rights. It offers guidance to both the developers of generative AI (e.g., technology companies) and the deployers of generative AI (e.g., both technology and non-technology companies) for ensuring a responsible, safe, and rights-respecting approach to developing and deploying generative AI systems.

Definitions

What is Generative AI?

Generative AI is a type of machine learning, a field of computer science focused on building systems that learn patterns and structures in existing data. Generative AI models are trained on massive amounts of data, which enable models to recognize patterns and structures in the data or understand meaning in words or phrases. Generative models use this knowledge to generate new content in the form of text, image, audio, video, code, or other forms of media in response to user prompts. Commonly known generative AI technologies include chatbots, such as ChatGPT and Google Bard, and image-generating technologies, such as Adobe Firefly and Midjourney.

Some generative AI models rely on a training method called reinforcement learning from human feedback (RLHF). This method relies on human labelers to assess generative AI outputs for their quality. In their evaluation of model outputs, labelers may consider accuracy, toxicity (e.g., misinformation or hate speech), and/or adherence to specified principles (e.g., non-discrimination, political neutrality, or reducing bias in outputs). This feedback is incorporated into the model to improve model performance and to prevent models from generating harmful outputs.
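As a rough illustration of how labeler assessments might be turned into a training signal, the sketch below averages hypothetical labeler scores for several candidate outputs and derives "preferred over" pairs from them. The function names, the 1 to 5 rating scale, and the sample data are assumptions made for illustration; the sketch does not train any model and is not a description of any particular vendor's pipeline.

```python
from statistics import mean


def aggregate_ratings(ratings: dict[str, list[int]]) -> dict[str, float]:
    """Average the scores that several labelers gave to each candidate output."""
    return {output_id: mean(scores) for output_id, scores in ratings.items()}


def preference_pairs(avg_scores: dict[str, float]) -> list[tuple[str, str]]:
    """List (preferred, rejected) pairs for outputs whose average scores differ."""
    ranked = sorted(avg_scores, key=avg_scores.get, reverse=True)
    return [
        (better, worse)
        for i, better in enumerate(ranked)
        for worse in ranked[i + 1:]
        if avg_scores[better] > avg_scores[worse]
    ]


# Hypothetical scores (1 = poor, 5 = good) from three labelers for three outputs.
ratings = {"A": [5, 4, 5], "B": [2, 3, 2], "C": [4, 4, 3]}

averages = aggregate_ratings(ratings)
pairs = preference_pairs(averages)
print(pairs)
```

In practice, comparisons of this kind are commonly used to fit a separate reward model that then guides further training. Because every pair depends on human labeling, this step is where the time cost discussed later in this FAQ arises.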

Risks of Generative AI

Where do risks in relation to generative AI exist in company value chains?

Risks exist pertaining to both (1) the design, development, training, and optimization of generative AI models, and (2) the deployment of generative AI models.

Are generative AI risks relevant for the long or short term?

Risks relating to generative AI exist in both the near-term and the long-term. Companies should focus on current and near-term concerns, while maintaining sight of potential long-term impacts associated with the adoption of generative AI at scale; addressing real adverse impacts in the near-term will help companies, governments, and society prepare for long-term impacts.

What are the main risks pertaining to design, development, training, and optimization of models?

Some of the main categories of near-term risk are as follows.

- Coded bias: Generative AI models may be trained on datasets which reflect societal biases pertaining to race, gender, or other protected characteristics. Generative AI models and future technologies trained on generative AI foundation models may reproduce the biases encoded in the training data.

- Linguistic limitations: Generative AI models are currently not available in all languages. Furthermore, there may be discrepancies in model performance across languages and dialects in which models are currently available. Linguistic limitations may lead to increased digital divides for speakers of low-resource languages or linguistic minorities.

- Low interpretability: Generative AI models may have low levels of interpretability, meaning it is challenging to determine with full transparency how models make the decisions they make.[1] While techniques are being developed to increase transparency of AI decision-making, low interpretability creates current and near-term risks.[2] Low interpretability may pose challenges for diagnosing and correcting issues in the model. For example, if a model were consistently producing inaccurate or discriminatory outputs, it may be hard to understand why it is doing this, making it challenging to correct the issue. This increases the potential for harm when deploying models in high-risk contexts, such as autonomous driving or criminal justice.

- Implementing changes to models may be time-consuming: For models which rely on RLHF, the process of improving model outputs or implementing changes at scale may be time-consuming. While techniques are being developed to automate this process, the reliance on human labelers creates current and near-term risks.[3] For example, the issue of a model producing harmful outputs may not be quickly addressable, and those harmful outputs may be widely disseminated, increasing their potential to create adverse impacts.

[1] Interpretable Deep Learning: Interpretation, Interpretability, Trustworthiness, and Beyond

[2] Interpretability in Deep Learning

[3] Constitutional AI: Harmlessness from AI Feedback

What are the main risks pertaining to deployment of models?

Some of the main categories of near-term risk are as follows.

- Harms associated with jailbreaking: Jailbreaking, in this context, is when a user breaks the safeguards of a generative AI model, enabling it to operate without restrictions. This allows the model to produce outputs that may otherwise violate its programming. As generative AI models are deployed in increasingly high-risk settings, jailbreaking models may come with increased consequences or potential to cause harm. For example, jailbreaking models integrated into military technology may have severe impacts.

- Harmful outputs: Even with safeguards in place, generative AI models may produce outputs which are inaccurate, biased, nonrepresentative, plagiarized, unsourced, not relevant to the prompt, or otherwise harmful. The severity of the consequences associated with harmful outputs depends on the context in which the model is deployed. For example, for models deployed in the context of medical diagnosis, inaccurate outputs may be associated with greater adverse impacts.

- Harmful end uses and model manipulation: Generative AI models may be deployed for harmful end uses, and models may be manipulated to behave in harmful ways or to conduct harm at scale. For example, generative AI may be used to design and execute sophisticated and convincing scams or to spread disinformation rapidly at scale.

- Privacy: Generative AI has implications for privacy, including concerns pertaining to the collection and storage of data, how data may be resurfaced by models, how models may make accurate (or inaccurate) inferences about a user, or how outputs may re-identify users from anonymized data.

Do open or closed source generative AI models present greater risk?

There is active debate about the risks and benefits of “open versus closed source” generative AI models. Open-source AI models are made freely available to the public for distribution, modification, and use; closed-source models are not widely or freely available to the public, with access, distribution, and use typically controlled by the organization or company that developed them.

Open-source models may enable contributions, research, and knowledge-sharing from diverse groups of stakeholders, but may also increase the potential for misuse, abuse, or the amplification of bias or discrimination.

Closed-source models may provide greater control and protection against model misuse or abuse, but this may come at the expense of limiting transparency and the ability of researchers, developers, and the public to understand and assess the underlying data, algorithms, and decision-making processes of the model.

What are the main long-term risks and large-scale impacts?

Some of the main categories of longer-term risks are as follows, though many of these are also happening today. This FAQ does not cover longer-term existential questions (e.g., is AI a threat to the future of humanity) since they are largely irrelevant for business decision making today and divert attention away from more pressing risks.

- Worker displacement or the devaluing of labor: The adoption of generative AI across industries may be associated with the displacement of workers, increased unemployment, the devaluing of certain types of labor, and the exacerbation of wealth disparity. The labor market will need to evolve in response to the deployment of generative AI models across industries, and displaced workers may have to develop new skills or transition into new opportunities or roles.

- Proliferation of misinformation: The deployment of generative AI models may contribute to the proliferation of mis-/disinformation, particularly due to the technology’s capability to produce high volumes of “credible” content quickly. This may be salient during elections and key periods of civic engagement or unrest. The creation and spread of mis-/disinformation is likely to evolve, necessitating a mix of effective technical mitigations (e.g., content labeling, cryptographic provenance) and social mitigations (e.g., media literacy).

- Widespread distrust of media and the truth: The proliferation of highly realistic synthetic media (audio, image, video, etc.) may affect public perception of media. In an age when “seeing is no longer believing,” this may impact the ability of communities and societies to agree on what is truthful and real.

- Negative health outcomes: Engaging with generative AI models, or harmful content produced by generative AI models, may have impacts on mental health outcomes of users including increased instances of mental illness and self-harm. Generative AI models may amplify problems of targeted harassment, bullying, or false impersonation online, and some individuals or groups may be subject to harassment or bullying more than others.

Is this a complete list of risks?

No. Generative AI may pose risks to several other areas including: Indigenous peoples’ cultural and/or property rights (models may plagiarize original works by Indigenous artists or writers); rule of law (misuse of generative AI in the criminal legal system); peace and security (the proliferation of synthetic mis-/disinformation depicting national security threats may result in real-world harms); digital divide (inequitable access to generative AI and the benefits it engenders may exacerbate the digital divide across regions and languages); and others.

Opportunities of Generative AI

What are the main opportunities associated with generative AI?

There are potential opportunities for nearly all industries and society more broadly. These opportunities do not offset the risks (i.e., all risks should still be addressed even if benefits outweigh them) but do provide a rationale for the pursuit of generative AI.

- Creation of meaningful work opportunities: Generative AI models can be deployed to augment human activities and contribute to the development of new jobs or opportunities. If generative AI technologies are used to complement human labor or create new tasks or opportunities, then demand for labor may increase and/or higher-quality jobs may be created.

- Increases in productivity and innovation: Generative AI has been hailed for its ability to drive transformation and productivity and may be the basis of widespread technological innovations. Increases in innovation and productivity may be associated with broadly shared prosperity and increased standards of living.

- Detection of discriminatory or harmful content: The capabilities of generative AI models may make them powerful automatic detectors of harmful or discriminatory content online, thereby improving online content moderation and reducing reliance on human content moderators who often experience psychological impacts as the result of viewing harmful content.4

- Access to information and education: Generative AI may improve the information ecosystem by providing access to high-quality information in a speedy manner and in an accessible format. This may support the educational experience, particularly if models are adaptable to the capacities or learning styles of different users such as children or neurodivergent users.

- Accessibility solutions: Advances in technology, such as mobility devices and disability assistive products and services, enable increased quality of life for persons with disabilities. Generative AI may also provide greater accessibility options to persons with disabilities through use cases that address their specific needs.

- Access to health: Generative AI may accelerate the development of health interventions, including drug discovery and access to health information that supports basic needs.

Company action

What frameworks can be used to assess generative AI?

Companies developing and deploying generative AI should do so in a manner consistent with their responsibility to respect human rights set out in the UN Guiding Principles on Business and Human Rights (UNGPs).

The UNGPs offer an internationally recognized framework (e.g., human rights assessment) and a well-established taxonomy (e.g., international human rights instruments) for assessing risks to people. Furthermore, the UNGPs provide an established methodology for evaluating severity of harm, prioritizing mitigation of harm based on severity, and navigating situations when rights are in tension. This enables companies to determine appropriate action for mitigating potential harms associated with their business operations.

Human rights frameworks may be used in combination with existing AI ethics guidelines, either industry-wide guidelines or individual company guidelines, to inform companies’ approaches to ethical generative AI development and deployment. Existing AI ethics frameworks include:

Does responsibility for addressing risks reside primarily with the developers or deployers of generative AI?

Companies across industries are deploying generative AI solutions, and the responsibility of mitigating risks resides with both developers and deployers of generative AI systems. Some risks may be best addressed by developers (e.g., risks associated with model creation) while other risks may be best addressed by deployers (e.g., risks associated with the application of the model in real life). Many risks will benefit from collaboration between developers and deployers (e.g., refining the model and improving guardrails in response to real world insights). BSR offers the following considerations.

Developers (e.g., technology companies)

- Industry-wide collaboration: Companies developing generative AI models can engage in industry-wide collaborative efforts, such as the Frontier Model Forum, to align on ethical and rights-respecting standards for developing, deploying, and selling generative AI models.

- Generative AI-specific principles: Companies may develop new principles to guide ethical approaches to developing generative AI models or may apply existing AI principles and tailor or expand them to account for the unique challenges and risks posed by generative AI systems.

- Ongoing due diligence: Conduct ongoing due diligence on generative AI models and integrations as they evolve and gain sophistication and capabilities. This includes human rights due diligence and model safety and capability evaluations, such as red teaming and adversarial testing.5

- Research: Fund research into real and potential societal risks associated with the adoption of generative AI and continued explorations of responsible release. Research efforts should seek to design mitigations for the risks identified, including evolving methods for increasing model interpretability and accountability, reducing bias and discrimination in models, and protecting privacy.

- Transparency and reporting: Publicly report on model or system capabilities, limitations, real and potential societal risks, and impacts on fairness and bias. Companies should also offer guidance on domains of appropriate and inappropriate use.

- Customer vetting: Before providing off-the-shelf or customized generative AI solutions, vet potential customers to ensure their intended use case or possible end uses of the technology will not lead to harm.

Deployers (e.g., both technology and non-technology companies)

- AI ethics principles: Companies seeking to implement generative AI solutions should ensure that they have robust AI ethics principles in place, adopting them if they have not already. AI ethics principles should account for the specific risks posed by generative AI, as well as AI more generally. Principles should set standardized, rights-respecting approaches for the adoption of AI systems across all areas of the business.

- Human rights due diligence: Prior to implementing customer-facing or internal generative AI solutions, conduct human rights due diligence to identify the potential harms that may result from the deployment of the technology.

- Mitigation: Implement guardrails to address risks surfaced in the human rights due diligence process. For customer-facing or employee tools built on generative AI, ensure there is a reporting channel for customers and employees to report issues with model performance.

- Consent: Obtain user or customer consent before training models on their data or content. Give users or customers the option to opt out of having their data or content used to train models (for example, allowing artists to opt out of having their artwork used to train image-generating models).

- Continuous monitoring and evaluation: Regularly assess training datasets and the performance of generative AI systems to evaluate them for fairness and bias. Integrate feedback from reporting channels on an ongoing basis.

Blog | Wednesday September 13, 2023

Five Ways to Activate Your Board on Nature

Nature-related issues represent a material risk for most companies. It’s important to recognize actions and immediate steps boards can take to address the growing concerns.


An Evolving Disclosure Landscape

The signing of the Global Biodiversity Framework in December 2022 was a turning point in global action on nature. Nature-related topics, including issues such as water use, deforestation, climate change, and pollution, have long been addressed by business and were at the forefront of environmental action for much of the last decade. The framework put nature on the world stage and raised a call to action that magnified expectations of business, not only to act on these issues but to address them in a holistic and strategic manner.

Concurrently, guidance and expectations around both target setting and disclosure have been formalized through credible frameworks such as those developed by the Science Based Targets Network (SBTN) and the Taskforce on Nature-related Financial Disclosures (TNFD). Businesses are now expected not just to address and mitigate risk but to contribute to a nature-positive future.

The recent Corporate Sustainability Reporting Directive (CSRD) also addresses climate change (ESRS E1) and biodiversity (ESRS E4) as material issues in its reporting standards, which will require disclosure of material impacts, risks, and opportunities in relation to sustainability matters and enterprise value. This will have governance implications: boards will need to oversee impacts, risks, and opportunities, how these relate to the company’s strategy and business model, and current and future processes to understand impacts, and they will need to sign off on these disclosures. On biodiversity specifically, this includes impact drivers of biodiversity loss such as climate change, land use, water use, pollution, and land degradation, as well as impacts on the state of species.

Expectations for Boards Addressing Nature and Biodiversity Loss

There are several reasons why nature needs to be on the agenda for corporate boards. Three primary examples include:

- Nature is essential for achieving climate goals. The importance of Board engagement on climate is well established, and only increasing. Businesses need to ensure they are enabling climate-competent Boards, as increasing external pressures drive accountability for Boards to be engaged and oversee climate goals and transition plans. To achieve these goals and demonstrate meaningful and impactful progress, companies must integrate nature and nature-based solutions (NbS) into their strategies and related interventions. Given the nascency of using NbS to quantify progress toward climate goals in Scope 3, oversight and sufficient competency by the Board on nature is critical to ensuring credibility, minimizing risk, and maximizing impact.

- Large businesses will be accountable for addressing material nature issues. Recent developments in nature-related legislation, regulation, and voluntary frameworks such as TNFD and regulatory frameworks such as CSRD have elevated the imperative for Boards to understand, and engage with, nature-related issues. This includes understanding which pressures on nature are material for the business, both in terms of dependencies on nature/business risk and outward impacts on people and the environment. Assessing the materiality of nature pressures throughout the value chain will be required for many companies, and for those issues deemed material, science-driven target setting, appropriate action, and related disclosure should be undertaken. Oversight and engagement by Boards on related strategies – particularly as they relate to long-term planning (and notably, growth projections) – will be meaningful as companies begin to act and disclose in line with compliance requirements.

- Negative impacts on nature can carry substantial direct and indirect costs. Direct business impacts of the loss of nature and biodiversity include water scarcity, rising insurance premiums, loss of viable and productive agricultural land, supply chain instability, and resource scarcity, among others. Just as important are indirect costs related to impacts on nature. These include human rights, biodiversity loss, shifting workforces, loss of cultural land, and other areas that corporations will need to manage if they are not proactively addressing their negative impacts on nature. As Boards consider other sustainability issues, they will need to consider how their business activities related to nature impacts are indirectly driving risk on other important social and environmental issues.

Recommendations for Business

Given the clear imperative of Board engagement on nature, what actions can Boards take now to help them engage, and prepare for required oversight? There are a few immediate steps that can be enacted:

- Build up competency and educate Board members on nature. The issues addressed under a comprehensive nature strategy are nuanced and often complex, and Boards should understand how key terms are defined, the connection between different terms and concepts, and how they are applicable to the business and varying business lines. For instance, does the Board know the distinction between biodiversity and nature, do they understand the connection between the two terms, and how each impacts the business?

- Ask how climate plans are incorporating nature. Push management to articulate how nature will be integrated into climate strategies – particularly climate transition plans and pathways to net zero. If the business has not yet begun to do this, ask for it.

- Understand long-term impacts and related resourcing constraints. Consider undergoing scenario exercises to help illuminate how nature impacts may cause long-term risk and conflict with growth plans. Utilize results of a nature assessment to highlight the most material nature issues and build scenarios to help garner insights to ultimately feed into strategy – but also build awareness on where resources may need to be deployed and scaled up to appropriately mitigate identified risks (e.g., traceability, supply shifts, data collection, etc.).

- Prepare for disclosure. Disclosure related to increased regulatory requirements such as the CSRD is coming as soon as January 2024 for disclosure in 2025. Ask management how they are preparing to meet the requirements, and what the roadmap is to fill gaps in data or other key information sources that might not be available.

- Ask the ‘right’ questions to ensure credibility. Gain a clear understanding of how current or past work on related issue areas was developed. Were targets based on science, and is there intent to do formal science-based target setting through SBTN? Have you conducted a nature assessment? Are nature impacts mapped to human rights impacts? Is there a plan to address biodiversity as it relates to upstream supply chains? Is there consideration of how TCFD-aligned disclosure (if completed) will intersect with TNFD disclosure? These questions, and more, are critical to evaluating the credibility and efficacy of a nature strategy.

Both climate and nature are considered material topics for all companies, and are subject to oversight on disclosure of the related risks, opportunities, and impact on the value chain. Boards have an important role in identifying and understanding these issues in terms of process and content. BSR’s Nature and Board practices are partnering with members to upskill and address the role of nature in company strategies and collaborating with stakeholders to advance urgent action in creating a just and sustainable world. For more information on activating the Board on nature-related issues, contact BSR’s Nature team.

People

Sophie Tripier


Sophie works with BSR member companies across industries on sustainability management, including stakeholder engagement, materiality, strategy, and reporting, among other topics. She is part of BSR’s Future of Reporting collaboration, a group of companies sharing reporting best practices and tracking the regulatory landscape in the US and EU.

Sophie brings years of operational expertise as an in-house sustainability management specialist across diverse industries, including hospitality, corporate services, logistics, and manufacturing. She also has experience in managing CSR foundations and engaging with NGOs and NPOs. Prior to that, she worked for EcoVadis, focusing on the analysis and sustainability scoring of supply chains.

Sophie holds a BSc in Anthropology and Environmental Management and an MSc in Environmental Science from The University of Western Australia. She is also an accredited Climate Fresk Facilitator.